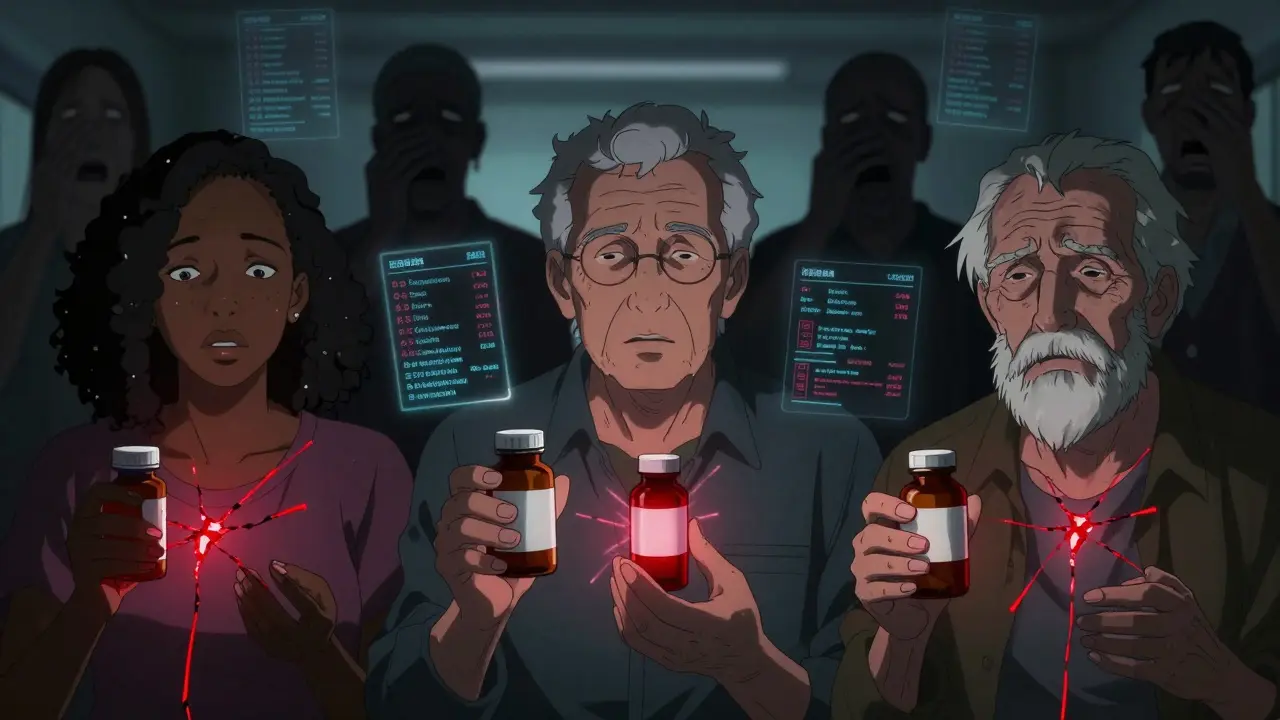

Medication errors aren’t random accidents. They’re often the result of broken systems - and those systems fail differently for different people. A Black patient in Atlanta, a Spanish-speaking elder in Los Angeles, or a low-income senior in rural Mississippi doesn’t just face the same risks as someone else. They face higher risks - and fewer protections. This isn’t about luck or individual mistakes. It’s about how healthcare systems, research, and policies have quietly left millions behind.

Why Some Patients Are More Likely to Be Harmed by Medications

Every year, medication errors cost an estimated 42 billion US dollars worldwide. That’s not just money. It’s hospital stays, lost work, chronic pain, and sometimes death. But who pays the heaviest price? Research shows it’s not evenly distributed. Marginalized groups - Black, Hispanic, Indigenous, elderly, non-English speakers - are far more likely to experience harmful medication errors, yet far less likely to have those errors documented or acted upon.

Take reporting. A 2025 study across five NHS hospitals found that patients from white or Black ethnic backgrounds were more likely to have medication incidents recorded than those from other minority groups. Why? Not because they made more mistakes. Because they were more likely to be heard. Patients from other backgrounds often didn’t trust clinicians, didn’t know how to speak up, or were shut down when they tried. One analysis found African American patients in Georgia were less likely to have their pain concerns taken seriously - not because their pain was less real, but because of unconscious bias in how clinicians interpreted their behavior.

Clinical Trials Don’t Reflect Real Patients

When a new drug is approved, it’s supposed to be safe for everyone. But how do we know that? Through clinical trials. And those trials? They’re overwhelmingly white. Between 2014 and 2021, Black participants’ share of enrollment in trials for FDA-approved drugs was only about one-third of their share of the disease burden in the U.S. population. That means we’re approving drugs based on how they work in a narrow slice of people - then giving them to everyone.
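The "one-third" figure is a ratio of two shares: a group's share of trial enrollment divided by its share of the disease burden. A minimal sketch of that arithmetic follows; the function name and the input numbers are hypothetical and chosen only to illustrate the calculation, not taken from FDA data.

```python
def representation_ratio(trial_share: float, burden_share: float) -> float:
    """Share of trial enrollment divided by share of disease burden.

    1.0 means the group is enrolled in proportion to its burden;
    values below 1.0 mean the group is under-represented.
    """
    if burden_share <= 0:
        raise ValueError("burden_share must be positive")
    return trial_share / burden_share


# Hypothetical inputs: a group makes up 5% of trial participants
# but 15% of the people living with the disease.
ratio = representation_ratio(trial_share=0.05, burden_share=0.15)
print(f"representation ratio: {ratio:.2f}")
```

A ratio near 0.33 is what "one-third of their share of disease burden" describes.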

The results? Dangerous gaps. In 2021, the U.S. Preventive Services Task Force couldn’t set specific colorectal cancer screening guidelines for Black Americans - even though they have the highest death rates - because there wasn’t enough data from trials that included them. The same goes for blood pressure meds, diabetes drugs, and painkillers. If a drug was only tested on white men, we don’t really know how it affects women, older adults, or people of color. And that’s not a gap in science - it’s a safety flaw.

The Cost Barrier Is Deadly

Even when a safer, more effective medication exists, it’s often out of reach. In 2022, nearly 19% of Hispanic Americans and over 11% of Black Americans were uninsured. Compare that to 7.4% of white Americans. That gap isn’t just about income. It’s about access. A new drug that cuts heart attack risk by 40% means nothing if you can’t afford it - or if your insurance won’t cover it. And because people of color are more likely to be uninsured or underinsured, they’re forced into cheaper, riskier alternatives: over-the-counter meds, skipped doses, or no treatment at all.

This isn’t hypothetical. We’ve seen it happen. When a new asthma inhaler comes out, wealthier patients get it. Lower-income patients keep using outdated, less effective ones - and end up in the ER more often. The system isn’t broken. It’s working exactly as designed - for some.

How Bias Shows Up in Prescribing

Clinicians aren’t bad people. But they’re human. And humans carry bias - even when they don’t know it.

Studies show doctors are less likely to prescribe opioids for Black patients with the same level of pain as white patients. Why? A false belief that Black people have higher pain tolerance. That same bias affects other medications. Doctors may assume a Latino patient won’t understand complex instructions. Or that an elderly Asian patient won’t adhere to a regimen. So they under-prescribe, simplify too much, or skip critical safety checks.

The Agency for Healthcare Research and Quality (AHRQ) calls this out clearly: implicit bias isn’t a side issue. It’s a direct threat to medication safety. And it’s not just about race. It’s about age, language, education, and even how someone dresses. A patient who seems "uncooperative"? Often just someone who doesn’t trust the system - or can’t communicate.

Why Reporting Systems Are Failing

Hospitals track medication errors. They have apps, forms, hotlines. But those systems were built for a world that doesn’t exist anymore.

Most reporting tools assume patients speak English fluently. They assume patients know what counts as an "error." They assume patients feel safe speaking up. None of that is true for many.

One Reddit thread from a patient advocacy group described a woman who was given the wrong dosage because the hospital didn’t provide an interpreter. She didn’t speak up - she didn’t know she had the right to. No one reported it. She almost died. That incident? Never made it into the hospital’s safety logs.

And when reports do get filed, they rarely include demographic data. No one tracks if errors cluster among non-English speakers. Or if elderly patients on multiple meds are being missed. Without that data, you can’t fix the problem.

What’s Being Done - And What’s Missing

The World Health Organization launched "Medication Without Harm" in 2017. The goal? Cut severe, avoidable medication-related harm by 50% in five years. It’s a strong framework. But here’s the catch: it doesn’t automatically include equity.

Only 86 of 194 countries have committed to it. And even among those, few have concrete plans to address disparities. In the U.S., only 32% of hospitals have formal programs to tackle medication safety gaps across racial or language lines - even though 78% say it’s a priority.

Some progress is happening. The Office of the National Coordinator for Health Information Technology launched a $15 million project in 2024 to build AI tools that scan electronic health records for patterns of disparity. Can the system flag when a patient with limited English is being prescribed a complex regimen? Can it alert staff when a patient is getting multiple high-risk drugs without proper monitoring? That’s the kind of real-time intervention we need.
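The two questions above can be read as simple rules over a patient record. The sketch below is a rule-based illustration only, not the ONC project's design: the `PatientRecord` fields, the regimen threshold, and the short drug list are all hypothetical placeholders rather than clinical guidance.

```python
from dataclasses import dataclass, field

# Hypothetical values for illustration only; not a clinical reference.
COMPLEX_REGIMEN_THRESHOLD = 5
HIGH_RISK_DRUGS = {"warfarin", "insulin", "methotrexate"}


@dataclass
class PatientRecord:
    patient_id: str
    limited_english: bool
    medications: list[str] = field(default_factory=list)
    monitoring_ordered: bool = False


def safety_flags(record: PatientRecord) -> list[str]:
    """Return human-readable flags for a single record."""
    flags = []
    # Rule 1: limited English plus a complex regimen.
    if record.limited_english and len(record.medications) >= COMPLEX_REGIMEN_THRESHOLD:
        flags.append("complex regimen with limited English: offer an interpreter")
    # Rule 2: several high-risk drugs with no monitoring on file.
    high_risk = [m for m in record.medications if m.lower() in HIGH_RISK_DRUGS]
    if len(high_risk) >= 2 and not record.monitoring_ordered:
        flags.append("multiple high-risk drugs without monitoring")
    return flags


record = PatientRecord(
    patient_id="example-1",
    limited_english=True,
    medications=["Warfarin", "Insulin", "drug_c", "drug_d", "drug_e"],
)
for flag in safety_flags(record):
    print(flag)
```

Fixed rules like these are easy to audit, which matters when the goal is to expose disparities rather than hide them inside a model.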

The Joint Commission also made equity a formal patient safety goal. That’s huge. But goals mean nothing without action. Training staff in cultural competency? Check. Hiring more translators? Check. But what about redesigning discharge instructions so they’re visual, not text-heavy? What about community health workers who go door-to-door to check if patients are taking meds correctly? Those are the real solutions.

The Path Forward

We can’t fix this with more posters or better apps alone. We need to rebuild systems from the ground up - with equity as the foundation.

- Require diverse representation in clinical trials - not as a box to check, but as a safety requirement.

- Build medication safety dashboards that track errors by race, language, age, and insurance status - and make those numbers public.

- Fund community-based programs where trusted local leaders help patients understand their meds and speak up when something’s wrong.

- Replace "patient compliance" language with "shared decision-making" - because if a patient doesn’t understand or trust the plan, they won’t follow it.

- Train every clinician on implicit bias - and tie that training to performance reviews.
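The dashboard idea in the list above comes down to one operation: grouping incident reports by a demographic field and comparing rates. Here is a minimal sketch, assuming each report is a dictionary with an `error_reported` flag; the field names and sample records are hypothetical.

```python
from collections import defaultdict


def error_rates_by_group(records, key):
    """Share of records with a reported error, per value of `key`.

    Records missing the field are counted under "unknown" so that gaps
    in demographic data stay visible instead of silently disappearing.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for record in records:
        group = record.get(key, "unknown")
        totals[group] += 1
        if record["error_reported"]:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Hypothetical sample data for illustration only.
records = [
    {"language": "English", "error_reported": False},
    {"language": "English", "error_reported": True},
    {"language": "Spanish", "error_reported": True},
    {"language": "Spanish", "error_reported": True},
    {"error_reported": False},  # no language recorded
]
rates = error_rates_by_group(records, "language")
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%}")
```

The same function works for race, age band, or insurance status by changing `key`, and the "unknown" bucket shows how much demographic data is missing in the first place.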

This isn’t about charity. It’s about safety. Medication errors kill. And they kill more often among the most vulnerable. If we don’t fix this, we’re not just failing patients - we’re failing the most basic promise of medicine: to do no harm.

Why are medication errors higher in minority communities?

Medication errors are higher in minority communities because of systemic barriers: lack of access to quality care, language differences, mistrust in healthcare systems, implicit bias from clinicians, and lower rates of insurance coverage. These factors combine to make it harder for patients to get the right medication, understand how to take it, or report when something goes wrong.

Do clinical trials include enough diverse participants?

No. From 2014 to 2021, Black participants’ share of enrollment in FDA drug trials was only about one-third of their share of the disease burden in the U.S. population. This lack of diversity means drugs are often tested on people who don’t represent the full range of patients who will use them - leading to unsafe or ineffective treatments for minorities.

How does language barrier affect medication safety?

Language barriers lead to miscommunication about dosage, side effects, and drug interactions. Patients may not understand warnings, skip doses, or take the wrong medication. Hospitals without professional interpreters see higher error rates - and those errors are rarely reported because patients feel unheard or ashamed.

Can technology help reduce medication safety disparities?

Yes - if designed with equity in mind. AI tools can now scan electronic health records to flag when patients with limited English or low income are prescribed complex regimens. Real-time alerts can prompt clinicians to offer translation services or simplify instructions. But technology alone won’t fix bias - it must be paired with training and policy changes.

What can hospitals do right now to improve medication safety for all patients?

Hospitals can start by collecting and publishing data on medication errors by race, language, and age. They can hire more bilingual staff and use visual aids instead of text-heavy instructions. They can train staff on implicit bias and involve community leaders in designing safety programs. Most importantly, they must listen to patients - especially those who’ve been ignored in the past.

Medication safety disparities are not incidental; they are structural. The data is unequivocal: marginalized populations face elevated risk due to systemic neglect, not individual failure. Clinical trial exclusion, language access deficits, and implicit bias in prescribing practices are not isolated phenomena - they are institutionalized. The WHO's "Medication Without Harm" initiative is a start, but without mandatory equity metrics tied to funding, it remains performative. We must treat demographic representation in research as a clinical safety imperative, not a diversity checkbox.

Oh, here we go again - the same tired narrative wrapped in academic jargon. Let’s be honest: if patients can’t follow simple instructions or refuse to engage with their care, that’s not a systemic failure, it’s personal responsibility. The notion that every adverse event is rooted in racism or classism is not only reductive, it’s dangerously infantilizing. Maybe instead of blaming clinicians, we should ask why so many patients distrust evidence-based medicine in the first place?

THIS IS A LEFTIST SCAM TO TAKE AWAY OUR MEDICINES AND GIVE THEM TO ILLEGALS AND REFUGEES WHO DON’T EVEN SPEAK ENGLISH

THEY’RE USING "EQUITY" AS A CODE WORD FOR SOCIAL ENGINEERING

CLINICAL TRIALS AREN’T DIVERSE BECAUSE NON-WHITES DON’T WANT TO BE GUINEA PIGS

AND IF YOU CAN’T READ A PRESCRIPTION YOU SHOULDN’T BE ON MEDS IN THE FIRST PLACE

THEY WANT TO FORCE TRANSLATORS AND AI ON HOSPITALS AND IT’S GOING TO COST BILLIONS

WE’RE PAYING FOR THIS AND IT’S NOT FAIR

So like… the AI tools they’re building to flag high-risk prescriptions? That’s actually kind of wild. Imagine if the EHR could auto-suggest a simplified regimen when someone’s on 7 meds and has limited English. No more "compliance" guilt. Just smart design. Also - visual meds charts? Yes. Text-heavy discharge papers? No. We’re still living in 2003.

Let me tell you something nobody else will - the real issue isn’t race or language, it’s the collapse of the social contract between patient and provider. In the 1980s, your GP knew your family, your job, your dog’s name. Now? You’re a 14-minute slot in an EHR with a 300-word note and a barcode. The system doesn’t see you as a person, it sees you as a risk profile. That’s why the Black patient in Atlanta and the elderly Spanish speaker in LA both get the same cold, transactional treatment. It’s not bias - it’s dehumanization. And you can’t fix dehumanization with training modules. You need to rebuild the entire architecture of care from the ground up. And yes, that means paying nurses more, hiring community liaisons, and shutting down the corporate hospital model that treats people like data points.

This is one of the most important public health discussions we’ve had in years. The data is clear, the solutions are known, and the moral imperative is undeniable. We have the tools: AI-driven disparity detection, community health worker networks, visual medication guides, and culturally competent training. What we lack is political will. I urge every healthcare administrator reading this to prioritize equity metrics in your annual reports. Not because it’s trendy - but because lives depend on it. Change is possible. It starts with accountability.

It’s not just about medication errors - it’s about epistemic injustice. When a patient’s lived experience is dismissed because it doesn’t align with clinical heuristics, we are engaging in a form of intellectual violence. The assumption that "non-compliance" equals "non-adherence" ignores the reality that many patients are systematically silenced. Who gets to define "correct" medication behavior? The doctor? The algorithm? Or the person who actually has to swallow the pill, manage the side effects, and navigate the insurance labyrinth? We must center patient epistemology. Not as an add-on. Not as a diversity initiative. As the foundational principle of pharmacovigilance.

Y’all need to stop overcomplicating this. We’ve got churches, barbershops, and community centers in every neighborhood. Put trained volunteers there with simple visual guides and bilingual flipcharts. Let them check in on folks weekly. No apps. No EHRs. Just human connection. I’ve seen it work in Lagos - why won’t we do it here? It’s not about tech. It’s about trust. And trust doesn’t come from a grant proposal. It comes from showing up.

Pharmacovigilance frameworks are fundamentally flawed when they fail to stratify adverse event data by sociodemographic variables. The absence of granular reporting renders any safety initiative statistically invalid. Without disaggregated data on race, language proficiency, insurance status, and zip code-level income, we are operating in a state of epistemic opacity. This is not a moral failing - it’s a methodological catastrophe. The Joint Commission’s equity goal is meaningless without mandatory, auditable data collection protocols.

Why are we spending millions on AI when we could just deport the illegal immigrants who are overusing the system? They’re the ones clogging ERs with wrong prescriptions and no insurance. We’re not talking about equity-we’re talking about welfare fraud. Stop giving free meds to people who don’t even pay taxes. This isn’t healthcare reform-it’s a redistribution scheme.

I’ve worked in rural clinics for 12 years. The most effective thing we did? Hired a local grandmother who spoke Spanish and Choctaw to walk patients through their meds every Tuesday. No tech. No training modules. Just someone they trusted. That’s the model. Not AI. Not policy. People. Real people who show up, remember names, and don’t rush.

Actually the data shows that medication error rates are higher among white rural populations due to opioid overprescribing and lack of pharmacy access - so your entire premise is flawed. Also the 2025 NHS study you cited had a sample size of 472 patients from only two hospitals. That’s not statistically significant. And you forgot to mention that Hispanic patients have lower error rates than Black patients in controlled studies. You’re cherry-picking. Also your punctuation is inconsistent. You wrote "Black Americans" with a capital B but "white Americans" with a lowercase w. That’s inconsistent orthography. Fix it.

Look I get it you wanna fix stuff but why are we always blaming doctors? I’ve been to the ER three times and every single time the nurse was nice. Maybe the real problem is patients don’t read the damn leaflets. I mean come on. If you can’t understand your prescription maybe you shouldn’t be on it. Also why are we spending money on AI when we could just make the pills bigger and put pictures on them?

Did you know that the CDC secretly uses AI to track medication errors and then suppresses the data because they don’t want the public to know how bad it really is? I mean think about it - why else would they not publish the full breakdowns? And why are all the trials funded by Big Pharma? It’s a cover-up. The real solution? Abolish the FDA and let people buy meds off the dark web. At least then you’d know what you’re getting. Also I heard they’re putting microchips in the pills now to monitor compliance. That’s why they want you to use apps. It’s all connected. The government. The hospitals. The algorithms. They’re watching. And they’re not telling you.